Neural Networks 101

A Beginner-Friendly Breakdown of How Neural Nets Actually Work

Neural networks power everything from image recognition to chatbots, but at their core, they're way simpler than people think. Let's slow it down and unpack how they work with an easy example: choosing white or black text based on a background color.

What Is a Neural Network?

Here's the big picture first: a neural network is just a system that takes numbers in, mixes them through simple math, and spits out a prediction.

Then we'll zoom in on the pieces.

It's built from tiny units called neurons: not biological ones, just little math machines.

- They take inputs (like R, G, B values from a color)

- Multiply each by a weight

- Add a bias

- Run the result through a function

- Produce an output

That's it.

One neuron is simple; a neural network is just many of them stacked in layers.

What Are Weights?

Weights are the neural net's sliders of importance.

- If a weight is large (positive or negative), the neuron cares a lot about that input.

- If it's close to zero, the neuron basically ignores it.

During training, the network tweaks these weights until it figures out what matters.
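To make the slider idea concrete, here's a rough sketch. The color and both sets of weights are made-up values chosen for illustration, not anything a real network learned.

```python
# Hypothetical input: an RGB color scaled to the 0-1 range (mostly red).
rgb = [0.9, 0.2, 0.1]

def weighted_sum(inputs, weights):
    """Multiply each input by its weight and add the results."""
    return sum(x * w for x, w in zip(inputs, weights))

# Illustrative weights: large on red, near zero on green and blue.
cares_about_red = [2.0, 0.01, 0.01]
# Illustrative weights: near zero on red and green, large on blue.
cares_about_blue = [0.01, 0.01, 2.0]

print(weighted_sum(rgb, cares_about_red))   # dominated by the red channel
print(weighted_sum(rgb, cares_about_blue))  # the strong red input barely counts
```

Same color, very different results: the weights decide which channel the neuron listens to.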

What Are Biases?

Biases are the neuron's built-in offset: a starting point before any input even shows up.

- They shift the neuron's output up or down.

- They let the network make decisions even when the inputs are weak or zero.

- Without biases, the model would be stuck trying to fit everything around zero, which kills flexibility.

Think of it like this:

Weights decide how much each input matters. Bias gives the neuron a baseline so it can activate even if all inputs are zero.
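Here's a small sketch of why that baseline matters. The function name `pre_activation` and all the numbers are assumptions picked for illustration; it computes the weighted sum plus bias, before any activation function is applied.

```python
def pre_activation(inputs, weights, bias):
    """Weighted sum of the inputs plus the bias (no activation yet)."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

black = [0.0, 0.0, 0.0]  # pure black: every input is zero

# With zero inputs and no bias, the weights have nothing to multiply,
# so the result is zero no matter which weights we pick.
for weights in ([0.5, 0.5, 0.5], [10.0, 3.0, 7.0]):
    print(pre_activation(black, weights, bias=0.0))

# The bias is the only thing that can move the result away from zero.
print(pre_activation(black, [0.5, 0.5, 0.5], bias=1.5))
```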

What Does a Neuron Actually Do?

A neuron does one line of math:

output = activation(w1×R + w2×G + w3×B + bias)

Don't worry if this looks intimidating; it's just multiplication and addition dressed up in math notation.

The activation function bends the output into a useful range, usually 0 to 1.

It's not smart.

But millions of these tiny decisions, stacked together, add up to behavior that looks intelligent.
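The one-line formula above translates almost word for word into code. This is a minimal sketch that assumes the activation is the sigmoid function (a common choice that maps any number into the range 0 to 1); the weights and bias are hand-picked placeholders, not trained values.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def neuron(r, g, b, w1, w2, w3, bias):
    """output = activation(w1*R + w2*G + w3*B + bias)"""
    return sigmoid(w1 * r + w2 * g + w3 * b + bias)

# Hypothetical parameters, chosen only to show the mechanics.
out = neuron(0.2, 0.4, 0.6, w1=-1.0, w2=-2.0, w3=-0.5, bias=1.5)
print(out)  # always somewhere between 0 and 1
```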

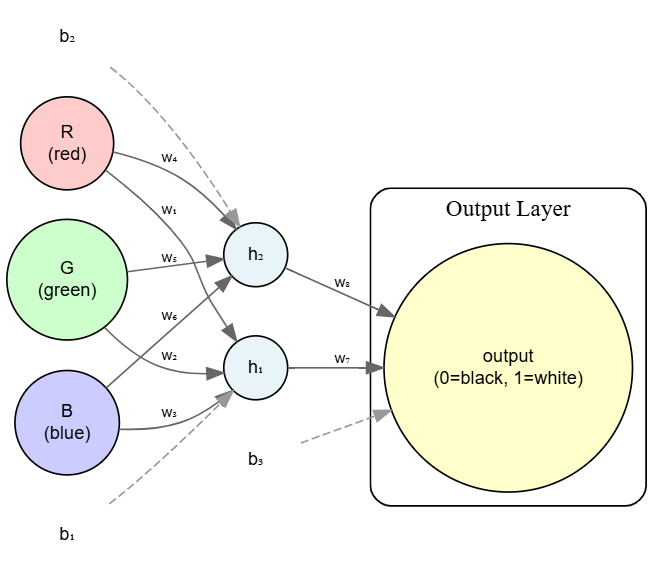

Forward Propagation: The Neural Net "Thinks"

Forward propagation is the moment the network makes a guess.

For our example:

- Input layer takes R, G, B

- Hidden layer learns brightness or contrast

- Output layer returns a number between 0 and 1

- close to 0 → black text

- close to 1 → white text

Simple flow:

inputs → hidden layer → output → prediction
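That flow can be sketched in a few lines. Everything specific here is an assumption made for illustration: two hidden neurons, sigmoid activations, untrained hand-picked parameters, and a 0.5 cutoff for turning the output number into a text color.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weights, biases):
    """One layer: each row of `weights` plus one bias defines one neuron."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]

def forward(rgb, params):
    """inputs -> hidden layer -> output."""
    hidden = layer(rgb, params["hidden_w"], params["hidden_b"])
    output = layer(hidden, params["output_w"], params["output_b"])
    return output[0]

def text_color(rgb, params):
    """Close to 1 means white text, close to 0 means black (0.5 cutoff assumed)."""
    return "white" if forward(rgb, params) >= 0.5 else "black"

# Hypothetical untrained parameters: 3 inputs -> 2 hidden neurons -> 1 output.
params = {
    "hidden_w": [[0.3, -0.2, 0.5], [-0.4, 0.1, 0.2]],
    "hidden_b": [0.0, 0.1],
    "output_w": [[0.7, -0.6]],
    "output_b": [0.05],
}

dark_blue = [0.1, 0.1, 0.4]
print(forward(dark_blue, params), text_color(dark_blue, params))
```

With untrained parameters the guess is basically arbitrary. Making it a good guess is the job of training.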

A Simple Neural Network for Text Color Choice

Backpropagation: The Neural Net Learns

After guessing, the network checks how wrong it was.

Then it adjusts itself using backpropagation:

- If raising a weight would have made the error bigger → lower it

- If raising it would have made the error smaller → raise it

Think of it like adjusting the volume knobs on a mixer until the song sounds right, except the network adjusts thousands of knobs automatically.

This repeats thousands of times.

Slowly, the network reshapes itself into something accurate.

Backprop is the secret sauce: it's how the network teaches itself.
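Here's one way the guess-check-adjust loop could look for the text color example. Treat it as a sketch under stated assumptions, not a reference implementation: the training labels come from a stand-in luminance rule (the BT.601 luma weights with a 0.5 cutoff), the network has three hidden neurons with sigmoid activations, the error is measured with log loss, and the learning rate and epoch count are arbitrary picks.

```python
import math
import random

def sigmoid(z):
    z = max(-60.0, min(60.0, z))  # clamp so math.exp cannot overflow
    return 1.0 / (1.0 + math.exp(-z))

def make_example(rng):
    """Random color plus a label from a stand-in luminance rule (assumption)."""
    rgb = [rng.random() for _ in range(3)]
    luminance = 0.299 * rgb[0] + 0.587 * rgb[1] + 0.114 * rgb[2]
    return rgb, (1.0 if luminance < 0.5 else 0.0)  # 1 = white text

def forward(rgb, net):
    hidden = [sigmoid(sum(x * w for x, w in zip(rgb, row)) + b)
              for row, b in zip(net["w1"], net["b1"])]
    out = sigmoid(sum(h * w for h, w in zip(hidden, net["w2"])) + net["b2"])
    return hidden, out

def train_step(rgb, target, net, lr):
    hidden, out = forward(rgb, net)              # 1. guess
    d_out = out - target                         # 2. how wrong was it?
    # 3. pass the blame backwards to each hidden neuron (chain rule)
    d_hidden = [d_out * w * h * (1.0 - h) for w, h in zip(net["w2"], hidden)]
    # 4. nudge every weight and bias in the direction that reduces the error
    for j, h in enumerate(hidden):
        net["w2"][j] -= lr * d_out * h
    net["b2"] -= lr * d_out
    for j, d in enumerate(d_hidden):
        for i, x in enumerate(rgb):
            net["w1"][j][i] -= lr * d * x
        net["b1"][j] -= lr * d

def accuracy(data, net):
    hits = sum((forward(rgb, net)[1] >= 0.5) == (target == 1.0)
               for rgb, target in data)
    return hits / len(data)

rng = random.Random(0)
n_hidden = 3
net = {
    "w1": [[rng.uniform(-1, 1) for _ in range(3)] for _ in range(n_hidden)],
    "b1": [0.0] * n_hidden,
    "w2": [rng.uniform(-1, 1) for _ in range(n_hidden)],
    "b2": 0.0,
}
train_set = [make_example(rng) for _ in range(500)]
test_set = [make_example(rng) for _ in range(200)]

before = accuracy(test_set, net)
for epoch in range(50):                # "this repeats thousands of times"
    for rgb, target in train_set:
        train_step(rgb, target, net, lr=0.5)
after = accuracy(test_set, net)
print(f"accuracy before: {before:.2f}, after: {after:.2f}")
```

The before and after accuracies are measured on colors the network never trained on, so any improvement reflects a learned pattern rather than memorized examples.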

The Math You Need

Surprisingly little:

- Basic algebra

- Multiplying numbers

- The idea of "error"

- A soft understanding of derivatives (you don't need to calculate them)
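If "derivative" sounds scary, here's the soft version: nudge a weight a tiny bit and see how much the error moves. The sketch below assumes a one-input neuron with no bias, a sigmoid activation, and squared error; the input, target, and learning rate are made-up values.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def error(w, x=0.8, target=1.0):
    """Squared error of a one-input, no-bias neuron (illustrative setup)."""
    return (sigmoid(w * x) - target) ** 2

w = 0.5
nudge = 1e-6

# Slope estimate: how much does the error change per unit change in w?
slope = (error(w + nudge) - error(w - nudge)) / (2 * nudge)

# A negative slope means raising w lowers the error, so we step upward.
learning_rate = 0.1
new_w = w - learning_rate * slope

print(slope)                   # negative for this setup
print(error(w), error(new_w))  # the error shrinks after the step
```

Training libraries compute these slopes exactly and for every weight at once, which is why you rarely have to work them out by hand.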

Neural networks feel complex, but the math underneath is straight up lightweight ¬‿¬.

Why Does This Even Work?

Because the network keeps adjusting until it discovers patterns.

For our example, it eventually learns:

- Dark backgrounds → white text

- Light backgrounds → black text

But it learns this as a smooth transition rather than as a hard rule that a human wrote.

It figured it out from examples alone.

In Short

- Neurons are tiny math units.

- Weights decide what matters.

- Biases give a baseline to work from.

- Forward propagation is guessing.

- Backpropagation is learning.

- Training is repeating this until the guesses stop being bad.

Neural networks aren't magic.

They're just simple functions stacked high enough to look smart.